Utilities for Handling Disk Fragmentation in Linux

Disk fragmentation can be an issue even for Linux servers. We look at some utilities that can resolve fragmentation issues.

I was reading an article the other night related to optimizing data on your disk, in which the author talked about file and disk fragmentation even on Linux. This got me wondering, how much fragmentation is there on the Linux server where, as a system administrator, I constantly load and unload applications and do much of my testing?

What is Fragmentation?

First, let me explain how files become fragmented. In short, a hard drive has a number of sectors on it, each of which can contain data. Files, particularly large ones, must be stored across a number of different sectors. Let’s say you save a number of different files on a clean disk. Each of these files will be stored in contiguous sectors. Later, if you update one of those files and increase its size, the file system will attempt to store the new parts of the file right next to the original parts. Unfortunately, if there’s not enough uninterrupted space, the file must be split into multiple parts. In the Linux ext4 file system, each contiguous part is referred to as an “extent”. When your hard disk reads the data, its heads must skip around between different physical locations on the drive to read each extent, and this can hurt performance, especially if you have large files with many extents.

Defragmentation

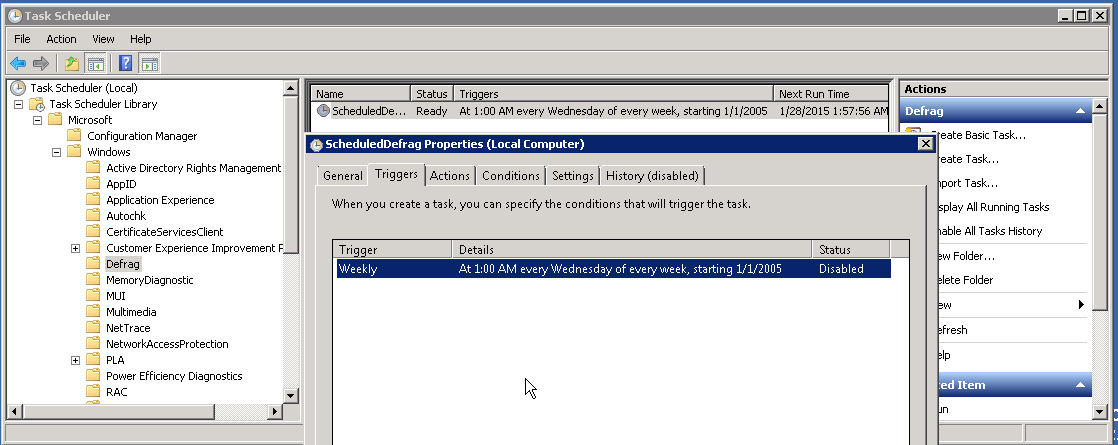

Performing disk defragmentation (or “defragging”) will reorganize the data on a hard disk, allowing it to operate more efficiently. File systems like NTFS have some built in buffers of free space that can reduce fragmentation issues, but files can and do still become fragmented. Starting with Windows 2008, Microsoft has included an automatic weekly Defrag in its scheduler, but it needs to be enabled manually:

Defragging in Linux

The Linux ext4 file system handles this slightly differently, by scattering files across the disk and leaving larger gaps between them. This usually avoids the contiguous-space problem, but for larger files, fragmentation can still become an issue, especially if the system has limited free space in which to place files. So what is the best way to handle fragmentation in Linux?

I’ll also mention that to examine the consistency of a file system with a tool like fsck (a utility whose name stands for “file system consistency check”), you have to unmount the volume, which can cause issues with a system’s availability. This is why I wanted to find a utility that would work around that limitation, especially when I want to avoid the downtime that would be caused by rebooting a server so that fsck can do its check.

As a solution to this issue, I came across a rather simple command called “filefrag” that is included in a package called e2fsprogs. Keep in mind that fragmentation is most likely on larger files; smaller ones tend to be less fragmented and to have less impact on your system. To use the command, I first searched for the 20 largest files on my system:
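The exact command used for that search is not reproduced here. One way to build such a list, as a minimal sketch that assumes GNU find (for `-printf`), is shown below. The function name `largest_files` is only illustrative.

```shell
# List the N largest regular files under a directory, biggest first.
# Output format: size in bytes, a space, then the path.
# Assumes GNU find, which provides the -printf action.
largest_files() {
    dir="${1:-/}"
    count="${2:-20}"
    # -xdev keeps find on a single file system, so /proc, /sys and
    # other mounted volumes are not scanned.
    find "$dir" -xdev -type f -printf '%s %p\n' 2>/dev/null |
        sort -rn |
        head -n "$count"
}

# Example (run as root to see every file):
#   largest_files / 20
```

Sorting on the leading byte count keeps the pipeline simple, and the second column can be passed straight to filefrag.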

I then simply typed:

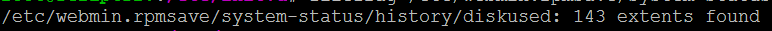

The command returned the number of extents found on that particular file. After checking several files, I could see that a few of them were well over 100 extents, which basically means that the files were fragmented into 100+ parts rather than being in one contiguous extent.
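Checking files one at a time gets tedious, so here is a rough sketch of how the output could be filtered by extent count. It assumes filefrag's usual one-line summary (`path: N extents found`). The helper name `fragmented_over` and the default threshold of 100 are illustrative choices, not part of e2fsprogs.

```shell
# Keep only filefrag summary lines whose extent count exceeds a threshold.
# Reads lines such as "/var/lib/data.db: 152 extents found" on stdin and
# prints "<count> <path>", most fragmented file first.
fragmented_over() {
    threshold="${1:-100}"
    # Move the count in front of the path; lines in any other format are dropped.
    sed -nE 's/^(.*): ([0-9]+) extents? found$/\2 \1/p' |
        awk -v t="$threshold" '$1 + 0 > t + 0' |
        sort -rn
}

# Example (filefrag may need root to read some files):
#   filefrag /var/log/* 2>/dev/null | fragmented_over 100
```

Because the helper only reads standard input, it can also be run against saved filefrag output from another server.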

So how do you check and defrag your disk without unmounting the partition? It turns out to be surprisingly easy with a simple utility called “defragfs” (which is actually a Perl script). To run this utility, I downloaded the file, unzipped it, copied it to my /usr/bin/ folder, and then changed the permissions on the file so I could execute the script. I then proceeded to see how fragmented my system was by typing:

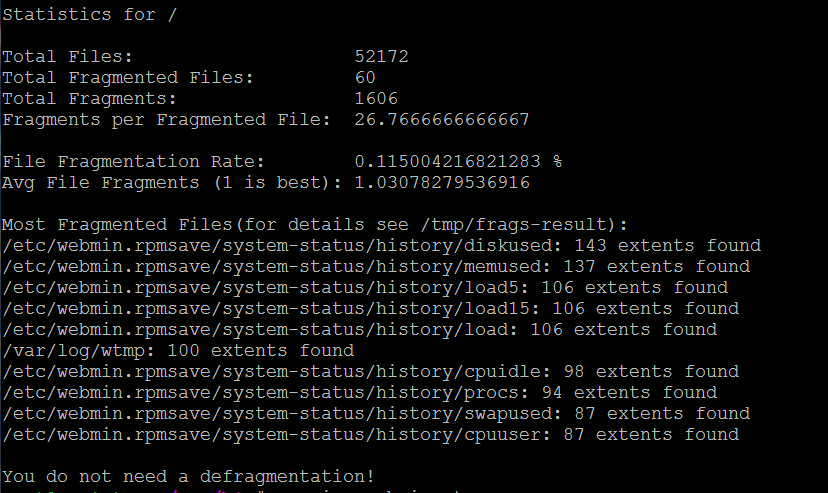

Without any options on the command line, the script simply checks all of the files on the drive and lists the files with multiple extents, using the filefrag command in the background.
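For reference, the installation steps described earlier (copy the unpacked script into /usr/bin/ and make it executable) could be sketched as follows. The helper name `install_script` is hypothetical, and the unpacked file name `defragfs` is an assumption, so adjust it to match whatever the downloaded archive actually contains.

```shell
# Copy a downloaded script into a bin directory and mark it executable.
# The /usr/bin default follows the article; the source file name is whatever
# the unpacked archive contains ("defragfs" is assumed in the example below).
install_script() {
    src="$1"
    dest_dir="${2:-/usr/bin}"
    # install copies the file and sets its mode in one step (0755 = rwxr-xr-x).
    install -m 0755 "$src" "$dest_dir/$(basename "$src")"
}

# Example (writing to /usr/bin needs root):
#   sudo install -m 0755 defragfs /usr/bin/defragfs
```

Using `install` rather than separate `cp` and `chmod` calls means the script is never left in place without its execute bit.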

In my example, the command scans the entire disk from the root folder, although I could easily have pointed it at any directory to limit the check to a smaller portion of the drive. Here is a sample of the output from one of my test servers. Notice that the number of extents on the first few files is well over 100:

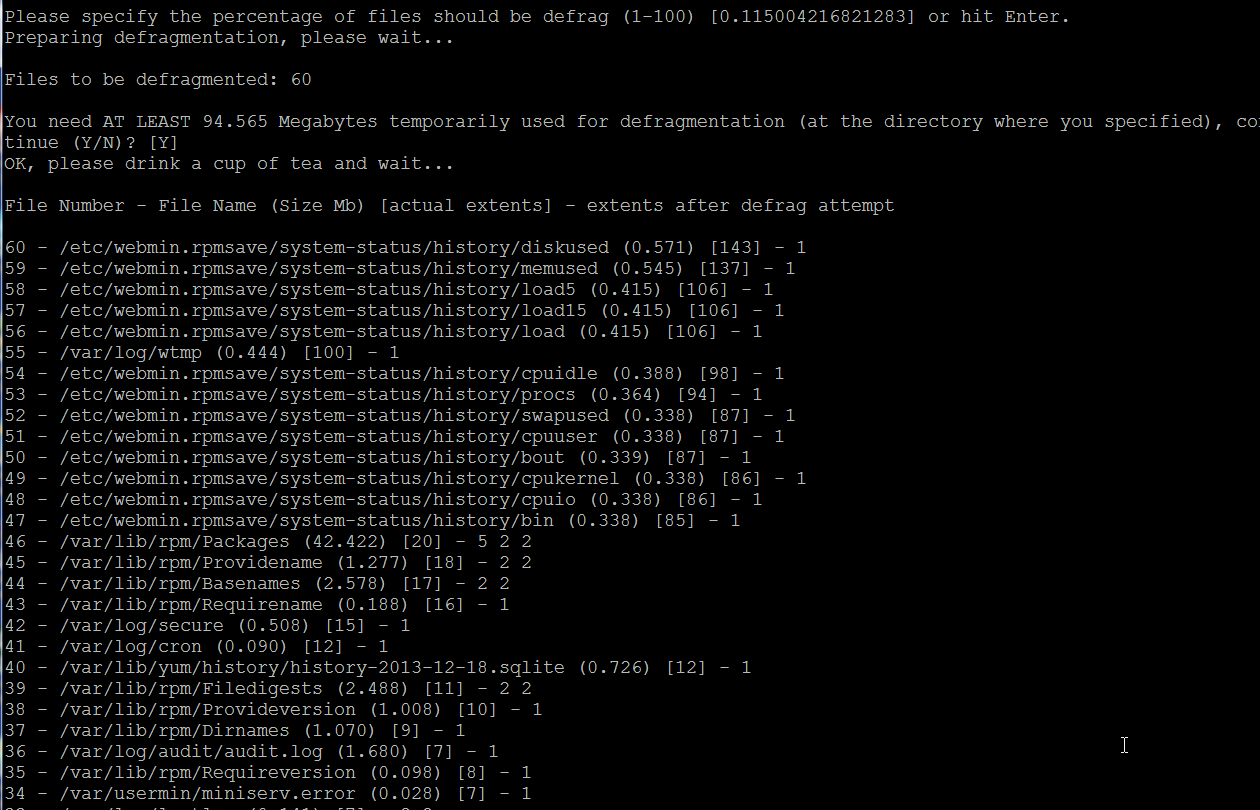

Now let’s run “defragfs” again, this time using the “-af” option, which tells the script to automatically fix fragmented files.

When the command runs, you will see a list of files scroll by, with the number of extents each file had before the fix shown in brackets, followed by the number it has after the fix. Notice that all the files with over 100 extents now show as having 1 contiguous extent:

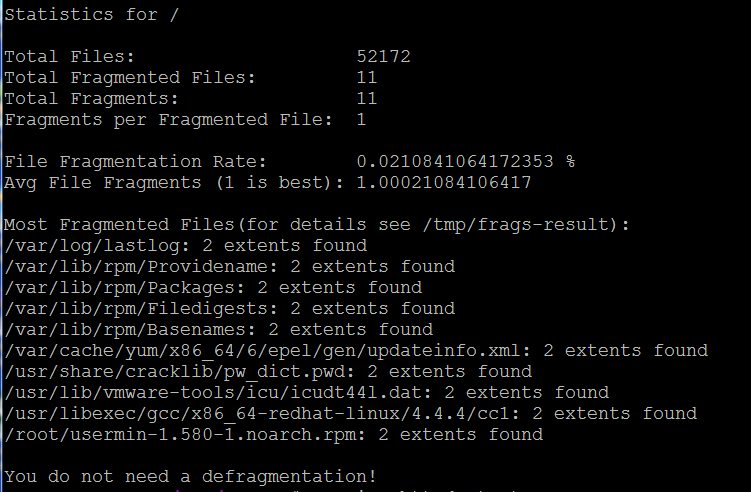

If you run the command a third time with no options, you will see that most of the files have been cleaned up.

Keep in mind that you may not be able to get all files defragged, due to any programs that might be accessing the files at the time, or any disk space issues. The end result of running this utility is that I was able to defrag my volume without taking the server offline or rebooting.

If you really want to get the number of fragmented files even lower, you will need to stop any applications or services that are writing to the disk or accessing files at the time you attempt to defrag them. To ensure minimal fragmentation and achieve the best efficiency on your Linux server, I would recommend creating a cron job to schedule a weekly defrag of your drive or, at a minimum, of certain folders.
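As a sketch of what such a weekly job might look like, the helper below prints an entry in /etc/cron.d format. Several things here are assumptions: the Sunday 03:00 schedule, the `root` user field, the function name `weekly_defrag_entry`, and above all the argument order `defragfs <directory> -af`, which you should verify against the script's own usage text before relying on it.

```shell
# Print an /etc/cron.d-style entry that runs a weekly defrag of one directory.
# ASSUMPTIONS: defragfs is installed in /usr/bin and accepts "<directory> -af";
# the argument order is a guess, so check the script's usage message first.
weekly_defrag_entry() {
    target="${1:-/}"
    # Fields: minute hour day-of-month month day-of-week user command
    # "0 3 * * 0" means every Sunday at 03:00.
    printf '0 3 * * 0 root /usr/bin/defragfs %s -af\n' "$target"
}

# Example (writing to /etc/cron.d needs root):
#   weekly_defrag_entry /var/lib | sudo tee /etc/cron.d/defragfs
```

Scheduling the job for a quiet period matters here, since files that are open during the run may not be defragmented.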

Before looking into this topic, I had never really given defragmentation on Linux much thought, but as you can see from the examples above, Linux OS files do get fragmented, and at some point could impact your server’s performance. I’m glad I’ve found this gem to add to my toolbox, and I hope it can help you as well. If you have any questions about Linux file systems, fragmentation, or server management, please contact us to speak to a Systems Engineer, or share any other questions or comments you might have below.